What were the numbers?

Often in business, we're presented with charts where the y-axis is unlabeled because the presenter wants to conceal the numbers. Are there ways of reconstructing the labels and figuring out what the data is? Surprisingly, yes, there are.

Given a chart like this:

you can often figure out what the chart values should be.

The great Evan Miller posted on this topic several years ago ("How To Read an Unlabeled Sales Chart"). He discussed two methods:

- Greatest common divisor (gcd)

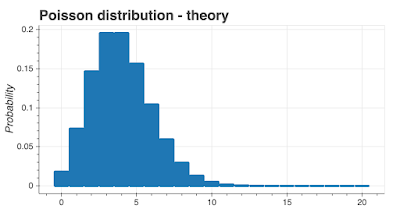

- Poisson distribution

In this blog post, I'm going to take his gcd work a step further and present code and a process for reconstructing numbers under certain circumstances. In another blog post, I'll explain the Poisson method.

The process I'm going to describe here will only work:

- Where the underlying data values are integers.

- Where there's 'enough' range in the underlying data.

- Where the maximum underlying data is less than about 200.

- Where the y-axis includes zero.

The results

Let's start with some results and the process.

I generated this chart without axis labels; the goal was to recreate the underlying data. I measured the screen y-coordinates of the top and bottom plot borders (187 and 677) and of the top of each bar. Using the process and code I describe below, I was able to correctly recreate the underlying data values, which were \([33, 30, 32, 23, 32, 26, 18, 59, 47]\).

How plotting packages work

To understand the method, we need to understand how a plotting package will render a set of integers on a chart.

Let's take the list of numbers \([1, 2, 3, 5, 7, 11, 13, 17, 19, 23]\) and call them \(y_o\).

When a plotting package renders \(y_o\) on the screen, it will put them into a chart with screen x-y coordinates. It's helpful to think about the chart on the screen as a viewport with x and y screen dimensions. Because we only care about the y dimensions, that's what I'll talk about. On the screen, the viewport might go from 963 pixels to 30 pixels on the y-axis, a total range of 933 y-pixels.

Here's how the numbers \(y_o\) might appear on the screen and how they map to the viewport y-coordinates. Note that the screen origin is top left, not bottom left as it would be on a chart. I'll "correct" for the different origin.

The plotting package will translate the numbers \(y_o\) to a set of screen coordinates I'll call \(y_s\). Assuming our viewport starts from 0, we have:

\[y_s = my_o\]

Let's look at just the longest bar, which corresponds to the number 23. I measured its start and end at 563 and 27, which gives a length of 536 pixels, so \(m\) in this case is 536/23, or about 23.3.

There are three things to bear in mind:

- The set of numbers \(y_o\) are integers

- The set of numbers \(y_s\) are integers; we can't have half a pixel, for example.

- The scalar \(m\) is a real number
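As a rough sketch of the mapping just described, the snippet below scales \(y_o\) by \(m\) and rounds to whole pixels. The names `y_o`, `m`, and `y_s` mirror the symbols in the text. The rounding rule is an assumption; a real plotting package may round or anti-alias differently.

```python
# Original integer data, as in the text.
y_o = [1, 2, 3, 5, 7, 11, 13, 17, 19, 23]

# Scale factor from the measured longest bar: 536 pixels for the value 23.
m = 536 / 23

# Assumption: the plotting package rounds each bar length to a whole pixel.
y_s = [round(m * value) for value in y_o]

print(f"m = {m:.2f}")
print(y_s)
```

The point to notice is that `m` is a float while both lists hold integers, which is exactly the situation that makes the reconstruction hard.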

Integer only solutions for \(m\)

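In Evan Miller's original post, he only considered integer values of \(m\). If we restrict ourselves to integers, then most of the time:

\[m = gcd(y_s)\]

where gcd is the greatest common divisor.

To see how this works, let's take:

\[y_o = [1, 2, 3, 5, 7, 11, 13, 17, 19, 23]\]

and

\[m = 8\]

These numbers give us:

\[y_s = [8, 16, 24, 40, 56, 88, 104, 136, 152, 184]\]

whose gcd is 8, which gives \(m = 8\), which is correct.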
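Here is a minimal sketch of the integer-only case in Python. It assumes the list of numbers introduced earlier as \(y_o\) and the \(m = 8\) quoted above; `m_estimate` and `y_o_estimate` are illustrative names.

```python
from functools import reduce
from math import gcd

# Illustrative data: the y_o list from earlier, scaled by an integer m.
y_o = [1, 2, 3, 5, 7, 11, 13, 17, 19, 23]
m = 8
y_s = [m * value for value in y_o]

# The gcd of the screen values recovers m when gcd(y_o) is 1.
m_estimate = reduce(gcd, y_s)

# Dividing by the estimate reconstructs the original data.
y_o_estimate = [value // m_estimate for value in y_s]

print(m_estimate, y_o_estimate)
```

`reduce(gcd, ...)` is used rather than a multi-argument `gcd` call so the sketch also runs on older Python 3 versions.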

If we could guarantee \(m\) was an integer, we'd have an answer; we'd be able to reconstruct the original data just using the gcd function. But we can't do that in practice for three reasons:

- \(m\) isn't always an integer.

- There are measurement errors which means there will be some uncertainty in our \(y_s\) values.

- It's possible the original data set \(y_o\) has a gcd which is not 1.

In practice, we gather screen coordinates using a manual process, which introduces errors. We're likely to be off by at most a few pixels for each measurement, but even the smallest error means the gcd method won't work. For example, if the value on the screen should be 500 but we measure it as 499, this small error means the method fails. (There is a way around this failure that works for small measurement errors.)

If our original data set has a gcd greater than 1, the method won't work. Let's say our data was:

\[y_o = [2, 4, 6, 10, 14, 22, 26, 34, 38, 46]\]

and:

\[m = 8\]

we would have:

\[y_s = [16, 32, 48, 80, 112, 176, 208, 272, 304, 368]\]

which has a gcd of 16, which is an incorrect estimate of \(m\). In practice, the odds of the original data set \(y_o\) having a gcd > 1 are low.
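The two failure modes above can be sketched in a few lines of Python. The data values, the choice of \(m = 8\), and the helper name `gcd_of_list` are all assumptions made for illustration.

```python
from functools import reduce
from math import gcd


def gcd_of_list(values):
    """Greatest common divisor of a list of integers."""
    return reduce(gcd, values)


m = 8
y_o = [1, 2, 3, 5, 7, 11, 13, 17, 19, 23]
y_s_exact = [m * value for value in y_o]

# Failure 1: a one-pixel measurement error on the longest bar.
y_s_noisy = y_s_exact[:-1] + [y_s_exact[-1] - 1]

# Failure 2: original data that shares a common factor of 2.
y_o_even = [2 * value for value in y_o]
y_s_even = [m * value for value in y_o_even]

print(gcd_of_list(y_s_exact))  # exact integer case
print(gcd_of_list(y_s_noisy))  # collapses because of one bad pixel
print(gcd_of_list(y_s_even))   # absorbs the common factor of the data
```

A single pixel of error is enough to destroy the common divisor, and a common factor in the data is indistinguishable from a larger scale factor.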

The real killer for this approach is the fact that \(m\) is highly likely in practice to be a real number.

Real solutions for \(m\)

The only way I've found for solving for \(m\) is to try different values for \(m\) to see what succeeds. To get this to work, we have to constrain \(m\) because otherwise there would be an infinite number of values to try. Here's how I constrain \(m\):

- I limit the steps for different \(m\) values to 0.01.

- I start my \(m\) values from just over 1 and stop at a maximum \(m\) value. I get the maximum by assuming the smallest value I measure on the screen corresponds to a data value of 1. For example, if the smallest measurement is 24 pixels and the smallest possible original data value is 1, the maximum value for \(m\) is 24.
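The two constraints can be sketched as a small helper that lists the candidate values of \(m\). The function name `candidate_dividers` is hypothetical, and building each candidate from an integer multiple of the step is my own choice to limit floating-point drift.

```python
def candidate_dividers(smallest_length, step=0.01):
    """List candidate m values from just over 1 to just under smallest_length."""
    n_steps = round((smallest_length - 1) / step)
    # k starts at 1 so that m = 1 itself is excluded.
    return [1 + k * step for k in range(1, n_steps)]


# Example from the text: the smallest measurement is 24 pixels.
candidates = candidate_dividers(24)
print(len(candidates), candidates[0], candidates[-1])
```

With a 24-pixel smallest bar, this gives a finite grid of a couple of thousand candidates, which is cheap to search exhaustively.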

Now we've constrained \(m\), how do we evaluate \(y_s = my_o\)? First off, we define an error function. We want our estimates of the original data \(y_o\) to be integers, so the further away we are from an integer, the worse the error. For the \(i\)th element of our estimate of \(y_o\), the error estimate is:

\[round \left ( \frac{y_{si}}{m_{estimate}} \right ) - \frac{y_{si}}{m_{estimate}}\]

We're choosing the least squares error, which means minimizing:

\[ \frac{1}{n} \sum \left ( round \left ( \frac{y_{si}}{m_{estimate}} \right ) - \frac{y_{si}}{m_{estimate}} \right )^2 \]

In code, this comes out as:

```python
sum([(round(_y/div) - _y/div)**2 for _y in y])/len(y)
```

Our goal is to try different values of \(m\) and choose the solution that yields the lowest error estimate.
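To get a feel for how the error function behaves, here is a small sketch that evaluates it at three dividers. The bar lengths are the measured values from the worked example later in this post; the choice of dividers to compare (1.0, 5.9, and 7.0) is mine.

```python
def mse(y, div):
    """Mean squared distance of each y/div from its nearest integer."""
    return sum((round(_y / div) - _y / div) ** 2 for _y in y) / len(y)


# Measured bar lengths, in pixels, from the worked example.
y = [195, 177, 188, 136, 188, 154, 106, 348, 278]

for div in (1.0, 5.9, 7.0):
    print(div, mse(y, div))
```

A divider of 1.0 gives zero error because the pixel lengths are already integers, a good candidate gives a small error, and a poor candidate gives a noticeably larger one.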

The solution in practice

Before I show you how this works, there are two practicalities. The first is that \(m=1\) is always a solution and always gives a zero error, but it's probably not the right solution, so we're going to ignore \(m=1\). The second is that our measurements will contain human error. I'm going to assume the maximum error is 3 pixels for any measurement. To calculate a length, we measure the start and end of the bar (if it's a bar chart), which means our maximum uncertainty in a length is 2*3 = 6 pixels. That's why I set my maximum \(m\) to be min(y) + 2*MAX_ERROR.
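As a quick sketch of that upper bound, the helper below widens the largest candidate \(m\) by the worst-case length error. The function name `max_divider` and the three sample lengths are hypothetical.

```python
MAX_ERROR = 3  # assumed maximum error, in pixels, for one measurement


def max_divider(lengths, max_error=MAX_ERROR):
    """Largest m worth trying: the shortest length plus the worst-case error."""
    # A length comes from two measurements, so its error can reach 2*max_error.
    return min(lengths) + 2 * max_error


# Hypothetical bar lengths in pixels; the shortest is 24.
print(max_divider([24, 60, 96]))
```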

To show you how this works, I'll talk you through an example.

The first step is measurement. We need to measure the screen y-coordinates of the plot borders and the top of the bars (or the position of the points on a scatter chart). If the plot doesn't have borders, just measure the position of the bottom of the bars and the coordinate of the highest bar. Here are some measurements I took.

Here are the measurements of the top of the bars (_y_measured): \([482, 500, 489, 541, 489, 523, 571, 329, 399]\)

Here are the start and stop coordinates of the plot borders (_start, _stop): \(677, 187\)

To convert these to lengths, the code is just: `y_measured = [_start - _y_m for _y_m in _y_measured]`

The height of the plot area from the bottom border to the top border is: `y_extent = _start - _stop` = \(490\)

This gives us the measured lengths (y_measured): \([195, 177, 188, 136, 188, 154, 106, 348, 278]\)
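Put together, the measurement step looks something like this. The variable names follow the ones used in the text; `y_extent` is the name the later code uses for the plot height.

```python
# Screen y-coordinates of the bottom and top plot borders.
_start, _stop = 677, 187

# Screen y-coordinates of the top of each bar.
_y_measured = [482, 500, 489, 541, 489, 523, 571, 329, 399]

# Screen y grows downward, so a bar's length is bottom minus top.
y_measured = [_start - _y_m for _y_m in _y_measured]

# Height of the plot area in pixels.
y_extent = _start - _stop

print(y_measured)
print(y_extent)
```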

Now we run this code:

```python
import numpy as np
import pandas as pd

MAX_ERROR = 3  # Assumed maximum measurement error, in pixels
STEP = 0.01  # Step between candidate m values
ERROR_THRESHOLD = 0.01  # Largest error accepted as a reliable result


def mse(y, div):
    """Mean squared error calculation."""
    return sum([(round(_y/div) - _y/div)**2 for _y in y])/len(y)


def find_divider(y):
    """Return the non-integer that minimizes the error function."""
    error_list = []
    # Try every candidate from just over 1 up to the largest plausible m.
    for _div in np.arange(1 + STEP,
                          min(y) + 2*MAX_ERROR,
                          STEP):
        error_list.append({"divider": _div,
                           "error": mse(y, _div)})

    df_error = pd.DataFrame(error_list)
    df_error.plot(x='divider', y='error', kind='scatter')

    # Pick the first candidate with the lowest error.
    _slice = df_error[df_error['error'] == df_error['error'].min()]
    divider = _slice['divider'].to_list()[0]
    error = _slice['error'].to_list()[0]

    if error > ERROR_THRESHOLD:
        raise ValueError('The estimated error is {0} which is '
                         'too large for a reliable result.'.format(error))
    return divider


def find_estimate(y, y_extent):
    """Make an estimate of the underlying data."""
    # Guard against data with too little spread relative to the plot height.
    if (max(y) - min(y))/y_extent < 0.1:
        raise ValueError('Too little range in the data to make an estimate.')
    m = find_divider(y)
    return [round(_e/m) for _e in y], m


# y_measured and y_extent (_start - _stop) come from the measurements above.
estimate, m = find_estimate(y_measured, y_extent)
```

This gives us this output:

Original numbers: [33, 30, 32, 23, 32, 26, 18, 59, 47]

Measured y values: [195, 177, 188, 136, 188, 154, 106, 348, 278]

Divider (m) estimate: 5.900000000000004

Estimated original numbers: [33, 30, 32, 23, 32, 26, 18, 59, 47]

Which is correct.
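One way to sanity-check a result like this is to scale the estimates back up and compare them with the measured lengths. The sketch below does that with \(m\) rounded to 5.9; the name `residuals` and the idea of comparing against 2*MAX_ERROR are my own additions, not part of the original code.

```python
MAX_ERROR = 3  # assumed maximum error, in pixels, for one measurement
m = 5.9        # divider estimate from the output above, rounded

y_measured = [195, 177, 188, 136, 188, 154, 106, 348, 278]

# Reconstruct the data, then project it back onto the screen.
estimate = [round(_y / m) for _y in y_measured]
residuals = [abs(_e * m - _y) for _e, _y in zip(estimate, y_measured)]

print(estimate)
print(max(residuals))
```

If the largest residual is well inside the assumed measurement error, the estimate is at least consistent with what was measured.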

Limitations of this approach

Here's when it won't work:

- If there's little variation in the numbers on the chart, then measurement errors tend to overwhelm the calculations and the results aren't good.

- In a similar vein, if the numbers are all close to the top or the bottom of the chart, measurement errors lead to poor results.

- If \(m < 1\). Because the maximum y viewport range is usually 500-900 pixels, the method won't work for numbers greater than about 500.

- I've found in practice that if \(m < 3\) the results can be unreliable. Arbitrarily, I treat any error greater than 0.01 as too high, to protect against poor results. Maybe I should limit the results to \(m > 3\).
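The \(m < 1\) limitation can be illustrated with a short sketch. It assumes a 490-pixel viewport, data values running from 1 to 500, and simple rounding to whole pixels; all three are assumptions chosen for illustration.

```python
viewport_pixels = 490  # assumed plot height in pixels
max_value = 500        # assumed largest data value

# With more data values than pixels, the scale factor drops below 1.
m = viewport_pixels / max_value

# Assumption: each value is rendered at a whole-pixel height.
pixel_heights = {round(m * value) for value in range(1, max_value + 1)}

print(m)
print(len(pixel_heights), "distinct pixel heights for", max_value, "values")
```

When there are fewer distinct pixel heights than data values, at least two different values land on the same pixel, so no method based on pixel measurements alone can tell them apart.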

I'm not entirely convinced my error function is correct; I'd like an error function that better discriminates between values. I tried a couple of alternatives, but they didn't give good results. Perhaps you can do better.

Notice that the error function is 'denser' closer to 1, suggesting I should use a variable step size or a different algorithm. It might be that the closer you get to 1, the more errors and the effects of rounding overwhelm the calculation. I've played around with smaller step sizes and not had much luck.

Future work

If the data is Poisson distributed, there's an easier approach you can take. In a future blog post, I'll talk you through it.

Where to get the code

I've put the code on my GitHub page here: https://github.com/MikeWoodward/CodeExamples/blob/master/UnlabeledChart/approxrealgcd.py