Changing the world through charts

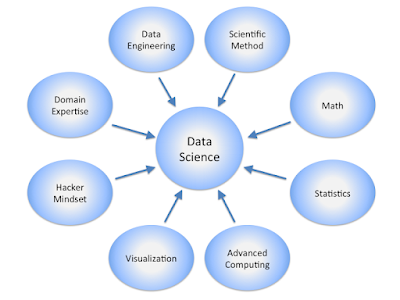

Two of the key skills of a data scientist are informing and persuading others through data. I'm going to show you how one man, and his team, used novel visualizations to illustrate the lives of African-Americans in the United States at the start of the 20th century. Even though they created their visualizations by hand, these visualizations still have something to teach us over 100 years later. The team's lack of computers freed them to try different forms of data visualization; sometimes their experimentation was successful, sometimes less so, but every chart has something to say, and there's a lesson here on communication for today's data scientists.

I'm going to talk about W.E.B. Du Bois and the astounding charts his team created for the 1900 Paris exhibition.

(W.E.B. Du Bois in 1904 and one of his 1900 data visualizations.)

Who was W.E.B. Du Bois?

My summary isn't going to do his amazing life justice so I urge you to read any of these short descriptions of who he was and what he did:

To set the scene, here's a very brief list of some of the things he did. Frankly, summarizing his life in a few lines is ridiculous.

- Born 1868, Great Barrington, Massachusetts

- Graduate of Fisk University and Harvard - the first African-American to gain a Ph.D. from Harvard

- Conducted ground-breaking sociological work in Philadelphia, Virginia, Alabama, and Georgia

- His son died in 1899 because no white doctor would treat him and black doctors were unavailable

- Was the primary organizer of "The Exhibit of American Negroes" at the Exposition Universelle held in Paris between April and November 1900

- NAACP director and editor of the NAACP magazine The Crisis

- Debated Lothrop Stoddard, a "scientific racist", in 1929 and thoroughly bested him

- Opposed US involvement in World War I and II

- Lifelong peace activist and campaigner, which led to the FBI investigating him in the 1950s as a suspected communist; his passport was withheld for 8 years

- Died in Ghana in 1963

Visualizing Black America at the start of the twentieth century

In 1897, Du Bois was a history professor at Atlanta University. His former classmate and friend, Thomas Junius Calloway, asked him to produce a study of African-Americans for the 1900 Paris world fair, the "Exposition Universelle". With the help of a large team of Atlanta University students and alumni, Du Bois gathered statistics on African-American life over the years and produced a series of infographics to bring the data to life. Most of the names of the people who worked on the project are unknown, and it's a mystery who originated the form of the plots, but the driving force behind the project was undoubtedly Du Bois. Here are some of my favorite infographics from the Paris exhibition.

The chart below shows where African-Americans lived in Georgia in 1890. There are four categories:

- Red - country and villages

- Yellow - cities 2,500-5,000

- Blue - cities 5,000-10,000

- Green - cities over 10,000

The length of each line is proportional to the population, and the chart makes it obvious that the huge majority of the population lived in the country and villages. I find the chart notable for three reasons: it doesn't follow any of the modern charting conventions, it clearly represents the data, and it's visually very striking. My criticism is that the design makes it hard to quantify the differences visually; for example, how many more people lived in the country and villages compared to cities of 5,000-10,000? If I were drawing a chart with the same data today, I might use an area chart; it would quantify things better, but it would be far less visually interesting.

The next infographic is a pair of choropleth maps that show the African-American population of Georgia counties in 1870 and 1880. Remember that the US Civil War ended in 1865, and with the Union victory came freedom for the slaves. As you might expect, there was a significant movement of the now-free people. Looking at the maps in detail raises several questions. For example, why did some areas see a growth in the African-American population while other areas did not? Why did the most populated areas remain the most populated? The role of any good visualization is to prompt meaningful questions.

This infographic shows the income and expenditure of 150 African-American families in Georgia. The income bands are on the left-hand side, and the bar chart breaks down the families' expenses by category:

- Black - rent

- Purple - food

- Pink - clothes

- Dark blue - direct taxes

- Light blue - other expenses and savings

There are several notable observations from this chart: the disappearance of rent above a certain income level, the rise in other expenses and savings with rising income, and the declining fraction spent on clothing. There's a lot on this chart and it's worthy of greater study; Du Bois' team crammed a great deal of meaning into a single page. For me, the way the key is configured at the top of the chart doesn't quite work, but I'm willing to give the team a pass on this because it was created in the 19th century. A chart like this wouldn't look out of place in a 2022 report, which is in itself startling.

My final example is a comparison of the occupations of African-Americans and the white population in Georgia. It's a sort-of pie chart, with the upper quadrant showing African-Americans and the bottom quadrant showing the white population. Occupations are color-coded:

- Red - agriculture, fishery, and mining

- Yellow - domestic and personal service

- Blue - manufacturing and mechanical industries

- Grey - trade and transportation

- Brown - professions

The fraction of the population in these employment categories is written on the slices, though it's hard to read because the contrast isn't great. Notably, the order of the occupations is reversed from the top to the bottom quadrant, which has the effect of making the sizes of the slices easier to compare - this can't be an accident. I'm not a fan of pie charts, but I do like this presentation.

Influences on later European movements - or not?

Two things struck me about Du Bois' charts: how modern they looked and how similar they were to later art movements like the Italian Futurists and Bauhaus.

At first glance, his charts look to me like they were made in the 1960s. The hand-drawn execution is obviously pre-computerization, but everything else about them suggests modernity, from the typography to the choice of colors to the layout. The experimentation with form is striking and is another reason why they look very 1960s to me; perhaps the use of computers to visualize data has constrained us too much. Remember, Du Bois' mission was to explain and convince, and he chose his charts and their layout to do so, hence the experimentation with form. It's quite astonishing how far ahead of his time he was.

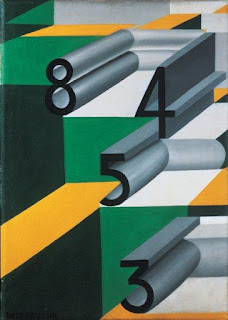

Italian Futurism started in 1909 and largely fizzled out at the end of the Second World War due to its close association with fascism. The movement emphasized the abstract representation of dynamism and technology, among other things. Many futurist paintings used a restricted color palette and have obvious similarities with Du Bois' charts; here are two examples (below). I couldn't find any reliable articles that examined the links between Du Bois' work and futurism.

| Numbers In Love - Giacomo Balla | Music - Luigi Russolo |
|---|---|
| Image from WikiArt | Image from WikiArt |

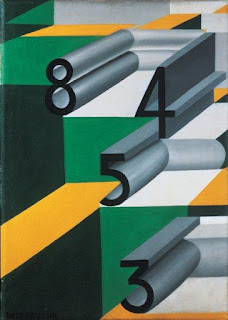

The Bauhaus design school (1919-1933) sought to bring modernity and artistry into mass production and had a profound and lasting effect on the design of everyday things, even into the present day. Bauhaus designs tend to be minimal ("less is more") and focus on functionality ("form follows function") but can look a little stark. I searched, but I couldn't find any scholarly study of the links between Du Bois and Bauhaus. However, the fact that the Paris exposition charts and the Bauhaus work use a common visual language is striking. Here's just one example, a poster for the Bauhaus school from 1923.

(Joost Schmidt, Public domain, via Wikimedia Commons)

Du Bois' place in data visualization

I've read a number of books on data visualization. Most of them include Nightingale's coxcomb plots and Playfair's bar and pie charts, but none of them include Du Bois' charts. Du Bois didn't originate any new chart types, which is maybe why the books ignore him, but his charts are worth studying because of their experimentation with form, their use of color, and, most important of all, their ability to communicate meaning clearly. Ultimately, of course, this is the only purpose of data visualization.

Reading more

W. E. B. Du Bois's Data Portraits: Visualizing Black America, Whitney Battle-Baptiste, Britt Rusert. This is the book that brought these superb visualizations to a broader audience. It includes a number of full-color plates showing the infographics in their full glory.

The Library of Congress has many more infographics from the Paris exhibition, and it has photos too. Take a look for yourself here https://www.loc.gov/collections/african-american-photographs-1900-paris-exposition/?c=150&sp=1&st=list - but note the charts are towards the end of the list. I took all the charts in this article from the Library of Congress site.

"W.E.B. Du Bois’ Visionary Infographics Come Together for the First Time in Full Color" is an article in Smithsonian Magazine that reviews the Battle-Baptiste book (above).

"W. E. B. Du Bois' Hand-Drawn Infographics of African-American Life (1900)" is an article in the Public Domain Review that reviews the Battle-Baptiste book (above).